By Jocelyn Gecker

When philosophy professor Darren Hick came across another case of cheating in his classroom at Furman University last semester, he posted an update to his followers on social media: “Aaaaand, I’ve caught my second ChatGPT plagiarist.”

Friends and colleagues responded, some with wide-eyed emojis. Others expressed surprise.

“Only 2?! I’ve caught dozens,” said Timothy Main, a writing professor at Conestoga College in Canada. “We’re in full-on crisis mode.”

Practically overnight, ChatGPT and other artificial intelligence chatbots have become the go-to source for cheating in college.

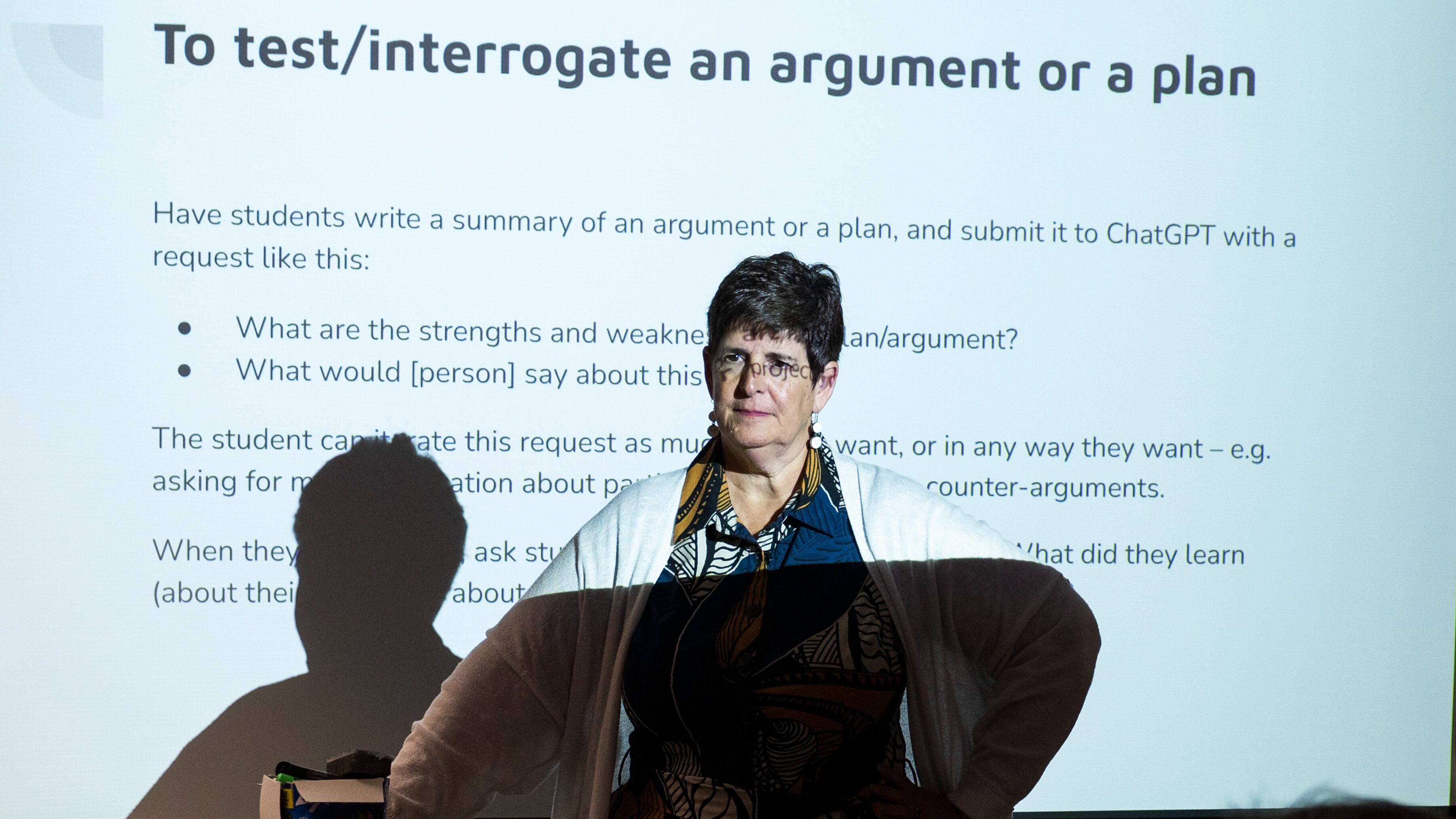

Now, educators are rethinking how they’ll teach courses this fall from Writing 101 to computer science. Educators say they want to embrace the technology’s potential to teach and learn in new ways, but when it comes to assessing students, they see a need to “ChatGPT-proof” test questions and assignments.

For some instructors that means a return to paper exams, after years of digital-only tests. Some professors will be requiring students to show editing history and drafts to prove their thought process. Other instructors are less concerned. Some students have always found ways to cheat, they say, and this is just the latest option.

An explosion of AI-powered chatbots including ChatGPT, which launched in November, has raised new questions for academics dedicated to making sure that students not only can get the right answer, but also understand how to do the work. Educators say there is agreement at least on some of the most pressing challenges.

— Are AI detectors reliable? Not yet, says Stephanie Laggini Fiore, associate vice provost at Temple University. This summer, Fiore was part of a team at Temple that tested the detector used by Turnitin, a popular plagiarism detection service, and found it to be “incredibly inaccurate.” It worked best at confirming human work, she said, but was spotty in identifying chatbot-generated text and least reliable with hybrid work.

— Will students get falsely accused of using artificial intelligence platforms to cheat? Absolutely. In one case last semester, a Texas A&M professor wrongly accused an entire class of using ChatGPT on final assignments. Most of the class was subsequently exonerated.

— So, how can educators be certain if a student has used an AI-powered chatbot dishonestly? It’s nearly impossible unless a student confesses, as both of Hick’s students did. Unlike old-school plagiarism, where text matches the source it is lifted from, AI-generated text is unique each time.

In some cases, the cheating is obvious, says Main, the writing professor, who has had students turn in assignments that were clearly cut-and-paste jobs. “I had answers come in that said, ‘I am just an AI language model, I don’t have an opinion on that,’” he said.

In his first-year required writing class last semester, Main logged 57 academic integrity issues, an explosion of academic dishonesty compared to about eight cases in each of the two prior semesters. AI cheating accounted for about half of them.

This fall, Main and colleagues are overhauling the school’s required freshman writing course. Writing assignments will be more personalized to encourage students to write about their own experiences, opinions and perspectives. All assignments and the course syllabi will have strict rules forbidding the use of artificial intelligence.

College administrators have been encouraging instructors to make the ground rules clear.

Many institutions are leaving the decision on whether to use chatbots in the classroom to instructors, said Hironao Okahana, the head of the Education Futures Lab at the American Council on Education.

At Michigan State University, faculty are being given “a small library of statements” to choose from and modify as they see fit on syllabi, said Bill Hart-Davidson, associate dean in MSU’s College of Arts and Letters who is leading AI workshops for faculty to help shape new assignments and policy.

“Asking students questions like, ‘Tell me in three sentences what is the Krebs cycle in chemistry?’ That’s not going to work anymore, because ChatGPT will spit out a perfectly fine answer to that question,” said Hart-Davidson, who suggests asking questions differently. For example, give a description that has errors and ask students to point them out.

Evidence is piling up that chatbots have changed study habits and how students seek information.

Chegg Inc., an online company that offers homework help and has been cited in numerous cheating cases, saw its shares tumble nearly 50% in a single day in May after its CEO Dan Rosensweig warned ChatGPT was hurting its growth. He said students who normally pay for Chegg’s service were now using ChatGPT’s AI platform for free instead.

At Temple this spring, the use of research tools like library databases declined notably following the emergence of chatbots, said Joe Lucia, the university’s dean of libraries.

“It seemed like students were seeing this as a quick way of finding information that didn’t require the effort or time that it takes to go to a dedicated resource and work with it,” he said.

Shortcuts like that are a concern partly because chatbots are prone to making things up, a glitch known as “hallucination.” Developers say they are working to make their platforms more reliable but it’s unclear when or if that will happen. Educators also worry about what students lose by skipping steps.

“There is going to be a big shift back to paper-based tests,” said Bonnie MacKellar, a computer science professor at St. John’s University in New York City. The discipline already had a “massive plagiarism problem” with students borrowing computer code from friends or cribbing it from the internet, said MacKellar. She worries intro-level students taking AI shortcuts are cheating themselves out of skills needed for upper-level classes.

“I hear colleagues in humanities courses saying the same thing: It’s back to the blue books,” MacKellar said. In addition to requiring students in her intro courses to handwrite their code, the paper exams will count for a higher percentage of the grade this fall, she said.

Ronan Takizawa, a sophomore at Colorado College, has never heard of a blue book. To him, as a computer science major, a return to paper feels like going backward, but he agrees it would force students to learn the material. “Most students aren’t disciplined enough to not use ChatGPT,” he said. Paper exams “would really force you to understand and learn the concepts.”

Takizawa said students are at times confused about when it’s OK to use AI and when it’s cheating. Using ChatGPT to help with certain homework like summarizing reading seems no different from going to YouTube or other sites that students have used for years, he said.

Other students say the arrival of ChatGPT has made them paranoid about being accused of cheating when they haven’t.

Arizona State University sophomore Nathan LeVang says he double-checks all assignments now by running them through an AI detector.

For one 2,000-word essay, the detector flagged certain paragraphs as “22% written by a human, with mostly AI voicing.”

“I was like, ‘That is definitely not true because I just sat here and wrote it word for word,’” LeVang said. But he rewrote those paragraphs anyway. “If it takes me 10 minutes after I write my essay to make sure everything checks out, that’s fine. It’s extra work, but I think that’s the reality we live in.”

The Associated Press education team receives support from the Carnegie Corporation of New York. The AP is solely responsible for all content.